Your data might be like:

Each word is part of a sequence, so the four data items shown have seq_len values of 4, 6, 5, 3. Each word has to be converted into an embedding vector. Suppose the embedding_dim is 3; then each word is converted into a vector with 3 cells. Finally, in NLP you are usually dealing with huge datasets, so you almost always place data items together into batches for training.

To recap, in NLP you have a seq_len (number of words in a sentence), embed_dim (number of numeric values that represent each word), and a batch size (number of items grouped together for training).

When you create a PyTorch LSTM you must feed it a minimum of two parameters: input_size and hidden_size. When you call the LSTM object to compute output, you must feed it a 3-D tensor with shape (seq_len, batch, input_size), which is the default layout.

For NLP, the seq_len is the number of words in a sentence, batch_size is the number of sentences grouped together for training, and input_size is the embed_dim (the number of numeric values used for each word).

With a TSR problem, the seq_len is the number of numeric values used to predict the next value in the series. I recommend that the batch size should usually be 1 (I’ll explain shortly). The input_size is always 1 because each value in the sequence is just one value (no embedding).

When using an LSTM, suppose the batch size is 10, meaning 10 sentences are processed together. The PyTorch documentation and resources on the Internet are very poor when it comes to explaining when the hidden cell state is reset to 0. Most sources say the 10 sentences in a batch are processed independently and the cell state is automatically reset to 0 after each batch. However, there is tremendous confusion with regard to when and how a PyTorch LSTM cell state is reset. For NLP, sometimes you want to retain state between sentences in a batch (if the sentences are related in some way) but sometimes you don’t want to retain state.
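The shapes and the state behavior discussed above can be checked directly. The following is a minimal sketch, not the demo program itself: the sizes (5 words, hidden_size of 4) and the variable names are assumptions chosen for illustration, and the inputs are random numbers rather than real embeddings or passenger counts. It relies on the documented behavior that `nn.LSTM` uses zeros for the initial hidden and cell state when no state is passed to the call.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# NLP-style input: 5 words per sentence, 10 sentences per batch, embed_dim of 3.
# hidden_size=4 is an arbitrary value for illustration.
nlp_lstm = nn.LSTM(input_size=3, hidden_size=4)
nlp_x = torch.randn(5, 10, 3)               # (seq_len, batch, input_size)
nlp_out, (nlp_h, nlp_c) = nlp_lstm(nlp_x)
print(nlp_out.shape)                        # (seq_len, batch, hidden_size)
print(nlp_h.shape)                          # (num_layers, batch, hidden_size)

# Feeding the first sentence by itself gives the same output as its column
# in the batch, so items inside one batch do not share state.
solo_out, _ = nlp_lstm(nlp_x[:, 0:1, :])
batch_items_independent = torch.allclose(nlp_out[:, 0:1, :], solo_out, atol=1e-5)

# TSR-style input: 4 consecutive values, batch size 1, one number per step.
tsr_lstm = nn.LSTM(input_size=1, hidden_size=4)
tsr_x = torch.randn(4, 1, 1)

# No state passed: the hidden and cell state start at zeros on every call,
# so two identical calls give identical outputs.
out_a, state_a = tsr_lstm(tsr_x)
out_b, _ = tsr_lstm(tsr_x)
same_when_reset = torch.allclose(out_a, out_b)

# State passed explicitly: the call starts from the (h, c) pair returned by
# the previous call, so the output differs from the zero-state run.
out_c, _ = tsr_lstm(tsr_x, state_a)
same_when_carried = torch.allclose(out_a, out_c)

print(batch_items_independent, same_when_reset, same_when_carried)
```

In other words, under these assumptions, retaining state between calls is something you do yourself by passing the returned `(h, c)` tuple back in; if you pass nothing, each call starts from zeros.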


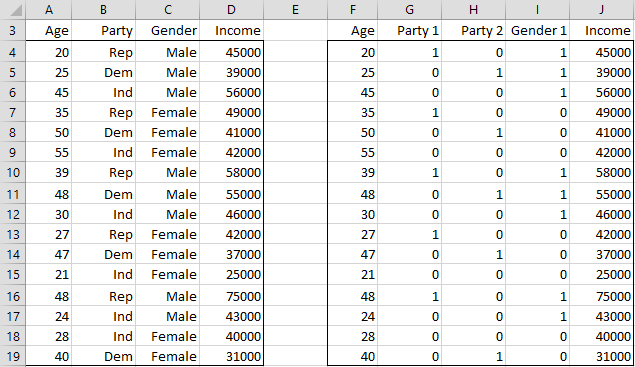

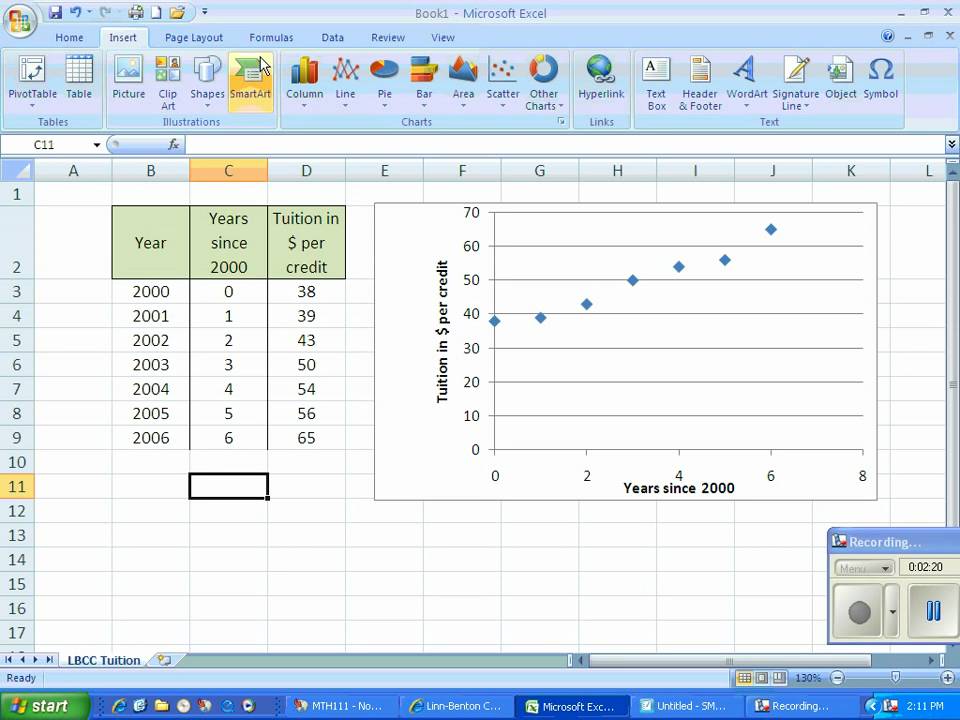

The time series regression using PyTorch LSTM demo program

To create this graph, I printed output values, copied them from the command shell, dropped the values into Excel, and manually created the graph.

Suppose you are doing NLP sentiment analysis for movie reviews.


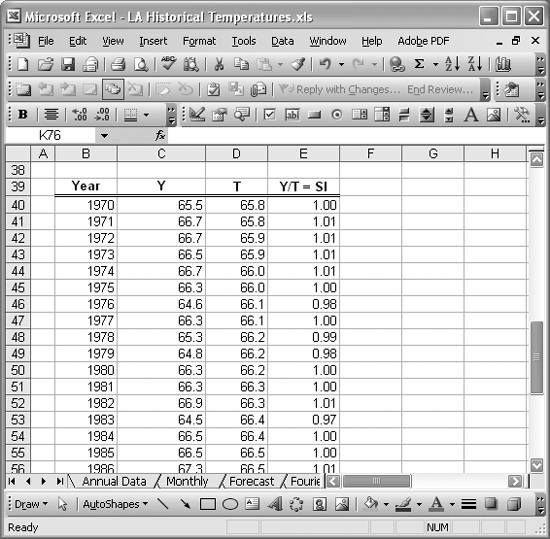

I found a few examples of TSR with an LSTM on the Internet but all the examples I found had either conceptual or technical errors. The problem I tackled was the well-known Airline Passenger data set. The data value is total airline passenger count for the month, in 100,000s. If you divide the data with a sequence length of 4, conceptually you use four consecutive values to predict the next value.

LSTMs were designed for natural language processing, not TSR. So, the first thing you need to know is how to map an NLP problem to a TSR problem.
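The idea of using four consecutive values to predict the next value can be sketched with a small sliding-window helper. This is an illustration under stated assumptions: `make_windows` is a hypothetical function name, and the numbers are made-up values, not the Airline Passenger data.

```python
def make_windows(series, seq_len):
    """Return (inputs, targets): each input is seq_len consecutive values,
    and each target is the value that immediately follows that window."""
    inputs, targets = [], []
    for i in range(len(series) - seq_len):
        inputs.append(series[i:i + seq_len])
        targets.append(series[i + seq_len])
    return inputs, targets

# Made-up values for illustration only.
series = [1.0, 1.1, 1.3, 1.2, 1.4, 1.5, 1.7]
xs, ys = make_windows(series, seq_len=4)
for x, y in zip(xs, ys):
    print(x, "->", y)
```

With seven values and a sequence length of 4 this yields three training items. To feed one window to an LSTM with a batch size of 1 and input_size of 1, each window would then be reshaped to (4, 1, 1).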

Implementing a neural prediction model for a time series regression (TSR) problem is very difficult. I decided to explore creating a TSR model using a PyTorch LSTM network. For most natural language processing problems, LSTMs have been almost entirely replaced by Transformer networks. But LSTMs can work quite well for sequence-to-value problems when the sequences are not too long. For example, I get good results with LSTMs on sentiment analysis when the input sentences are 30 words or less.